This task can be performed using LangWatch

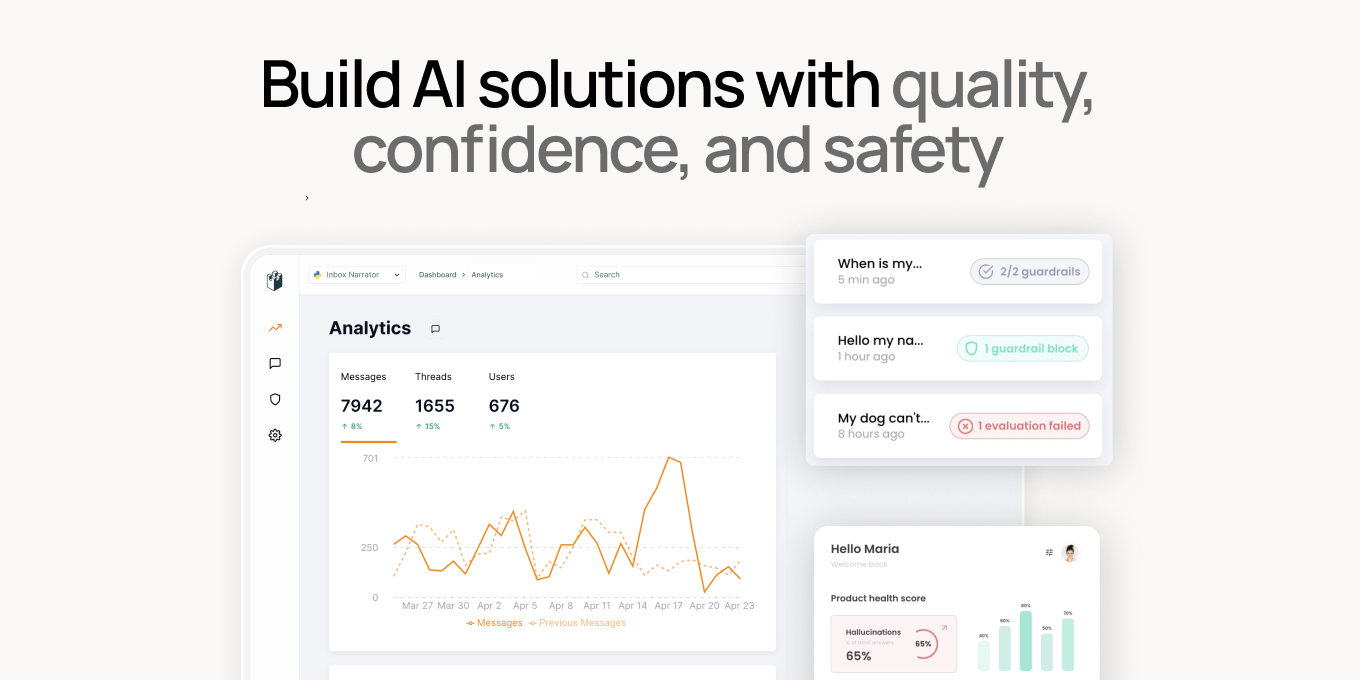

Build AI solutions with quality, confidence, and safety

Best product for this task

LangWatch provides an easy-to-use, open-source platform for improving and iterating on your current LLM pipelines and for mitigating risks such as jailbreaking, sensitive data leaks, and hallucinations.

What to expect from an ideal product

- Monitors LLM interactions to detect and block sensitive data leaks

- Offers tools to train LLMs to recognize and ignore sensitive content

- Regularly updates and refines filters to block jailbreaking attempts

- Provides detailed reports on LLM behavior for better oversight

- Integrates easily with existing LLM setups for seamless protection
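
To make the first point in the list concrete, here is a minimal sketch of what detecting and redacting sensitive data in an LLM response might look like. It is not LangWatch's API: the names `SENSITIVE_PATTERNS`, `find_sensitive`, and `guard_output` are hypothetical, and the two regular expressions are simplified assumptions that a production detector would need to extend considerably.

```python
import re

# Hypothetical, simplified patterns for illustration only.
# A real detector would cover many more categories and formats.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive(text):
    """Return the sorted names of all patterns that match the text."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def guard_output(text):
    """Redact every match and report which categories were found."""
    findings = find_sensitive(text)
    redacted = text
    for name in findings:
        redacted = SENSITIVE_PATTERNS[name].sub(f"[REDACTED {name}]", redacted)
    return redacted, findings


if __name__ == "__main__":
    response = "Contact jane@example.com, SSN 123-45-6789."
    safe_text, categories = guard_output(response)
    print(safe_text)
    print(categories)
```

A check like this would typically run on the model's output before it reaches the user, with the list of matched categories logged for the kind of reporting described above.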